Statistical Risk Factors

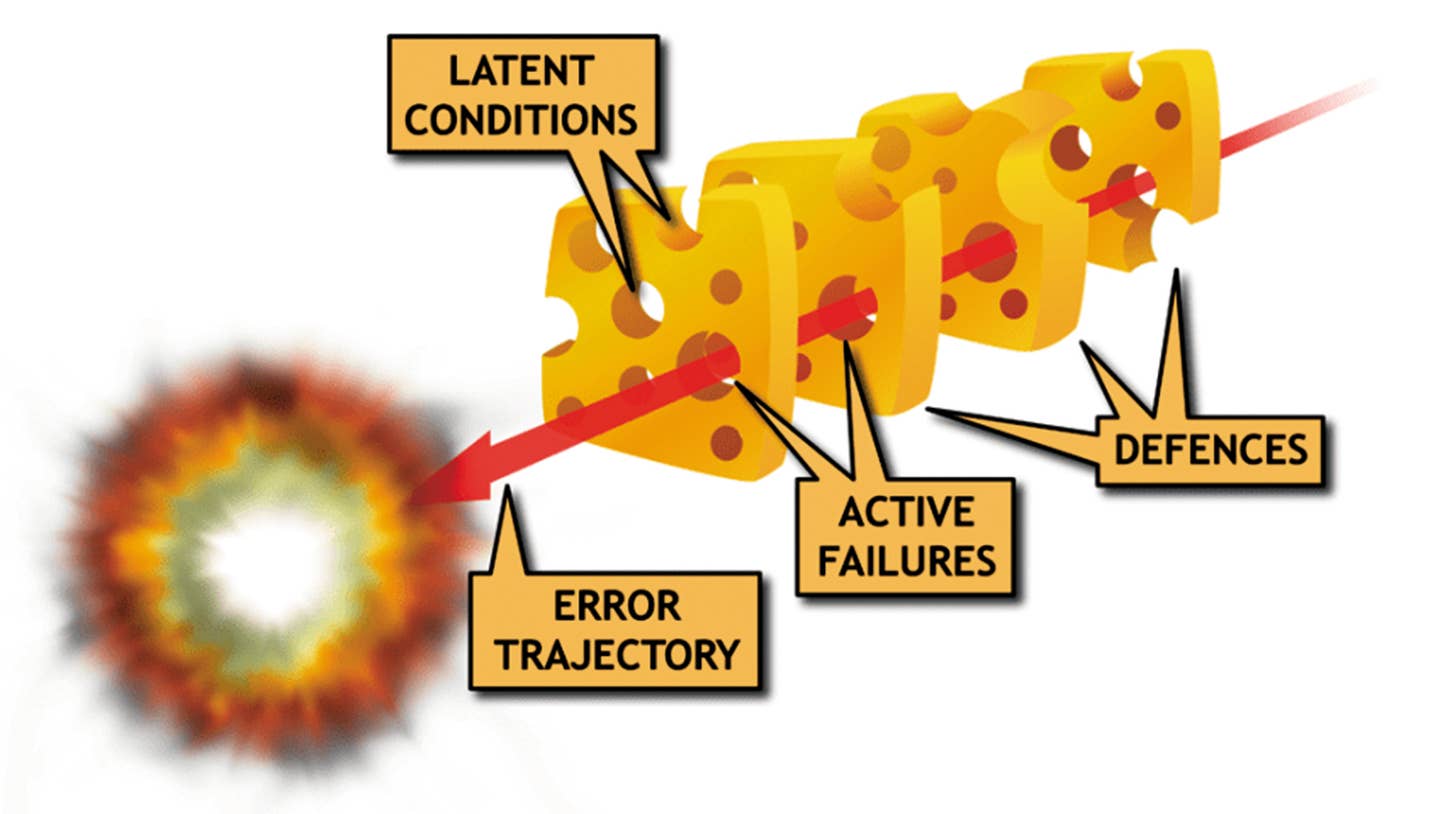

The Swiss Cheese Model illustrates graphically how latent conditions in a series of defenses can channel active failures to produce an undesirable result.

As IFR pilots, we know that general aviation carries inherent risks. Sure, if we hide behind the stellar record of the airlines, general aviation looks safe, but our accident rate is many times higher than that of the airlines. Of course, most of us do our best to avoid gracing the pages of an NTSB report by constantly adding to our knowledge base. We might devour the latest FAA and NTSB articles and reports commonly announced in the weekly AVweb or AOPA’s Aviation-e-Brief e-letters: click on the hyperlink and the entire report is at one’s fingertips.

That said, there’s a host of publications on GA safety that most pilots are unaware of—peer-reviewed scientific journals. Poring over any of these articles written in what might seem a foreign language (not to mention incomprehensible statistical tests) would likely put many of us to sleep.

However, those snoozer statistics serve a purpose: telling us, for example, whether a decrease or increase in accident rates over time is a fluke or likely real. As a decidedly nerdy scientist (and active pilot) who studies general aviation safety, I find it incumbent on me to keep abreast of this literature. In this two-part series I’ll “translate” into lay vernacular a few of these scientific studies relevant to the IFR pilot on a cross-country, rather than the $100 hamburger jaunt in CAVU weather.
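For readers curious what such a “fluke or real” check looks like, here is a minimal Python sketch. It is not the method of any particular study cited here. It assumes accidents can be treated as random, independent events, and it uses an exact conditional test: if two periods truly share one accident rate, then the split of accidents between them follows a binomial distribution set by each period’s share of flight hours. The function name and all of the counts and flight hours are hypothetical, invented purely for illustration.

```python
from math import comb


def rate_change_p_value(events_a, hours_a, events_b, hours_b):
    """Two-sided exact conditional test that two accident rates are equal.

    Assumption: if both periods share one underlying rate, then given the
    total number of accidents, the count in period A is
    Binomial(total, hours_a / (hours_a + hours_b)).
    """
    total = events_a + events_b
    share_a = hours_a / (hours_a + hours_b)

    def prob(k):
        # Chance that exactly k of the accidents fall in period A
        return comb(total, k) * share_a**k * (1 - share_a) ** (total - k)

    observed = prob(events_a)
    # Add up every split that is at least as unlikely as the one observed
    # (the small tolerance guards against floating-point ties)
    p_value = sum(
        prob(k) for k in range(total + 1) if prob(k) <= observed * (1 + 1e-9)
    )
    return min(p_value, 1.0)


# Hypothetical data: the same apparent drop in rate, seen with little
# exposure and then with ten times as much
p_small = rate_change_p_value(12, 100_000, 8, 100_000)
p_large = rate_change_p_value(120, 1_000_000, 80, 1_000_000)

print(f"12 vs 8 accidents per 100,000 hours:     p = {p_small:.3f}")
print(f"120 vs 80 accidents per 1,000,000 hours: p = {p_large:.4f}")
```

With these made-up numbers, the small sample gives a p-value of about 0.5, so the drop could easily be chance. The larger sample, with the identical rates, falls below the conventional 0.05 cutoff. The same headline change can be a fluke or likely real depending on how much flying stands behind it.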

Before I start, one logical question is whether such academic work is of practical value for the IFR pilot and, if so, how. While some research is ethereal and of limited practical benefit, often such endeavors (undertaken by the FAA, the NTSB or academics) have laid the foundation for some general aviation FARs. Other research has provided guidance for some of the metrics (e.g. the recommended distance from thunderstorms) or has amplified FAA pronouncements.

Human Factors and “Situation-Aversion”

For general aviation accidents the warm body occupying the front left seat is the weak link, with 84 percent of mishaps attributed to the pilot rather than equipment failure. In contrast, for our air carrier siblings, that value drops to 38 percent. With that in mind, let’s start off by cogitating (no pun intended) a bit on the flying-related thought process and the not-too-stellar performance of our low-end, computer-like brain when it comes to pilot decision-making.

Of course, we all know about get-there-itis. There’s a whole bunch of drivers of such behavior (e.g. a scheduled event, financial gain), and we’ve heard of these ad nauseam, so I won’t flog the issue. What I want to discuss is a corollary to this phenomenon for which one research group coined the term “situation-aversion.” This stemmed from their study in which 21 highly experienced pilots (boasting roughly 5000 hours total time and most holding either ATP or commercial tickets) were interviewed about unsafe flying behaviors on one or more prior flights.

What is situation-aversion? Suffice it to say that these are circumstances that steer pilots en route away from safe behavior. Some examples would be bypassing closer airports upon encountering adverse weather or after experiencing an equipment malfunction, because such aerodromes lacked a maintenance facility or lodging accommodations. Each of these was reported by some of the pilots interviewed. Many of us have encountered a similar situation. Most often (e.g. with a burning smell) it’s more important to put the plane down ASAP, notwithstanding any subsequent inconveniences, hardships or embarrassments.

Pilot Behavior and Adverse Weather

Still on the topic of decision-making for the general aviation pilot, another study showed that our mental processes are in a state of flux (and not necessarily favoring safety) as the flight progresses. This study sifted through 491 general aviation fixed-wing “occurrences” in Australia (the vast majority with private pilots) related to dealing with en route adverse weather (VMC to IMC). What’s an “occurrence?”

This side of the Pacific, we might think this means an “accident” or “incident” per the NTSB definitions. But that would be wrong in this instance. The term also included what the Australian authorities regarded as a safety deficiency (with no aircraft damage and nobody hurt) in addition to the incidents and accidents with which we’re all too familiar. In fact, for the Australian study most of the “occurrences” did not involve a bent airplane.

Sure, these were all VFR flights, but remember there’s a bunch of data out there showing that it’s not just the VFR pilot who gets snared in VMC-IMC. Holding an IFR ticket does not equate to proficiency: flying single-pilot in the southeast or southwest USA, one can get rusty quickly. You may be legal but almost certainly not proficient.

What’s the bottom line of the study? Well, interestingly, the researchers found that the mid-point (distance-wise) of the flight could be thought of as a psychological turning point for the pilot. As the flight progressed, the chance of a VMC-IMC encounter steadily increased, reaching its zenith during the last 20 percent of the flight distance.

On the other hand, pilots were most likely to avoid penetrating IMC if less than 40 percent of the planned flight distance had been completed. That’s not the only study to show such pilot behavior. An earlier publication looking at 77 weather-related, cross-country general aviation accidents in New Zealand found that such mishaps were more likely to occur at greater distances (more than 50 miles) into the flight. The closer we are to the destination, the more likely we are to push on. Resist the urge. We’ll see more of this in the second part of this article.

Complacency and Flight Safety

Another aspect of human factors during flight is the risk of complacency. Let’s look at its effect on controlled flight into terrain (CFIT) accidents. CFIT is deadly: most such mishaps are fatal. A hot-off-the-press (2019) article investigated the causes and factors for 50 CFIT accidents. Of these, just under half were during the approach phase, with a similar count for the en route segment; the few remaining occurred during departure. Admittedly, this was a hodgepodge of accidents including not only general aviation but commercial and military aviation too. The researchers used a rigorous and standardized procedure, the Human Factors Analysis and Classification System (abbreviated to HFACS), for their accident analysis.

HFACS was originally developed in 2001 by Dr. Shappell and Dr. Wiegmann to apply the “Swiss Cheese Model,” with which you may be familiar, in a practical manner to aviation accident analysis. The model likens human systems to multiple slices of Swiss cheese, stacked side by side, in which the risk of a threat becoming a reality is mitigated by the differing types of defenses “layered” one behind the other.

In theory, then, a lapse or weakness in one defense doesn’t allow a risk to materialize, because the other defenses are there to prevent a single point of failure.
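A back-of-the-envelope sketch in Python shows why stacking defenses helps. It rests on a strong simplifying assumption that the Swiss Cheese Model itself does not make: that each layer fails independently of the others, so the chance of every layer failing at once is simply the product of the individual chances. The layer names and probabilities below are hypothetical, invented for illustration only.

```python
from math import prod


def chance_all_defenses_fail(hole_probabilities):
    """Chance a hazard slips through every layer.

    Assumption: layers fail independently, so the probabilities multiply.
    """
    return prod(hole_probabilities)


# Hypothetical chance that each defense has a "hole" on a given flight
layers = {
    "preflight planning": 0.1,
    "weather briefing": 0.1,
    "in-flight decision-making": 0.1,
}

single_layer = chance_all_defenses_fail([layers["preflight planning"]])
all_layers = chance_all_defenses_fail(layers.values())

print(f"One defense only:   {single_layer:.3f}")
print(f"Three in series:    {all_layers:.3f}")
```

Under these assumed numbers, three imperfect layers cut the chance of a hazard getting through a hundredfold compared with one layer. In real operations the holes are not independent. A latent condition such as fatigue or schedule pressure can weaken several layers at once, which is exactly how the holes line up.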

HFACS was adapted and used by the military for its mishap investigations. While a detailed description of this tool is beyond our scope, suffice it to say that it is broken down into several categories (Unsafe Acts, Supervisory Failures, Organizational Influences). These are further divvied up into subcategories, each containing a bunch of single items (nanocodes) that are then scored for each accident. For our purposes we can ignore the categories and subcategories and focus on the bottom lines that the IFR pilot can relate to, i.e. the “nanocodes.”

So how were pilots involved in CFIT accidents snared? The nanocode “pilot complacency” scored frequently (30 of the 50 CFIT accidents, or 60 percent). That’s not good. While pilot experience benefits safety, it can also bring about complacency, which has the opposite effect. Don’t get lulled into this mindset even for routes you’ve flown many times; there is a litany of such accidents in the NTSB database. Perhaps less surprising was poor mission planning, another big zinger, scored for 38 of the 50 accidents (76 percent). Again, that’s relevant to the IFR pilot, who often uses the aircraft for cross-country trips, which entail much more planning than a spin around the local patch or a short hamburger hop.

Summing Up

In this article I’ve focused on some human elements that can put the IFR pilot at risk for an accident. In the next installment I’ll put some numbers to the various risk factors for a fatal accident to give the reader a feel for the extent to which he or she is flirting with danger. I’ll also discuss how one of the FAA metrics is grounded in science rather than being arbitrarily picked out of a hat.

Finally, realizing that “stuff happens” even to the best of pilots (always remember that the typical light aircraft has minimal redundancy in its systems, nor is it required to have more under FAR Part 23 certification), I’ll go into some detail on how best to reduce the chance of invoking a life insurance policy in an accident that otherwise should be survivable.

Part 2 Coming Soon

Douglas Boyd, Ph.D., is a research professor at ERAU-Worldwide and a Commercial, SMEL and instrument-rated pilot.

This article originally appeared in the January 2020 issue of IFR Refresher magazine.
