Among the arrows in the industry’s risk-management quiver is something called personal minimums. One idea behind personal minimums is that the FAA’s regulations are minimum standards we’re free to exceed: If VFR requires three miles and 1000 feet, why not bump that up to five miles and 2000 feet, at least when we’re inexperienced? An ILS takes us down to 200 feet AGL, but as we reach decision height, the localizer and glideslope are much narrower, and can require greater finesse. So how about adopting 500 feet instead? Aren’t these personal standards less risky? Maybe.

Adopting greater-than-required standards can be a good way for a less-experienced pilot to resolve various dilemmas arising from the knowledge he or she isn’t up to the task—whether it’s low ceilings, stiff crosswinds or fuel requirements. One reason is that human nature often results in our wanting a specific, objective standard against which to measure performance. We typically want to reduce every decision to a greater-than or less-than equation. But just changing the numbers we fly by may not be the best way to go about reducing risk, which is the objective, isn’t it?

Calculating Pilots

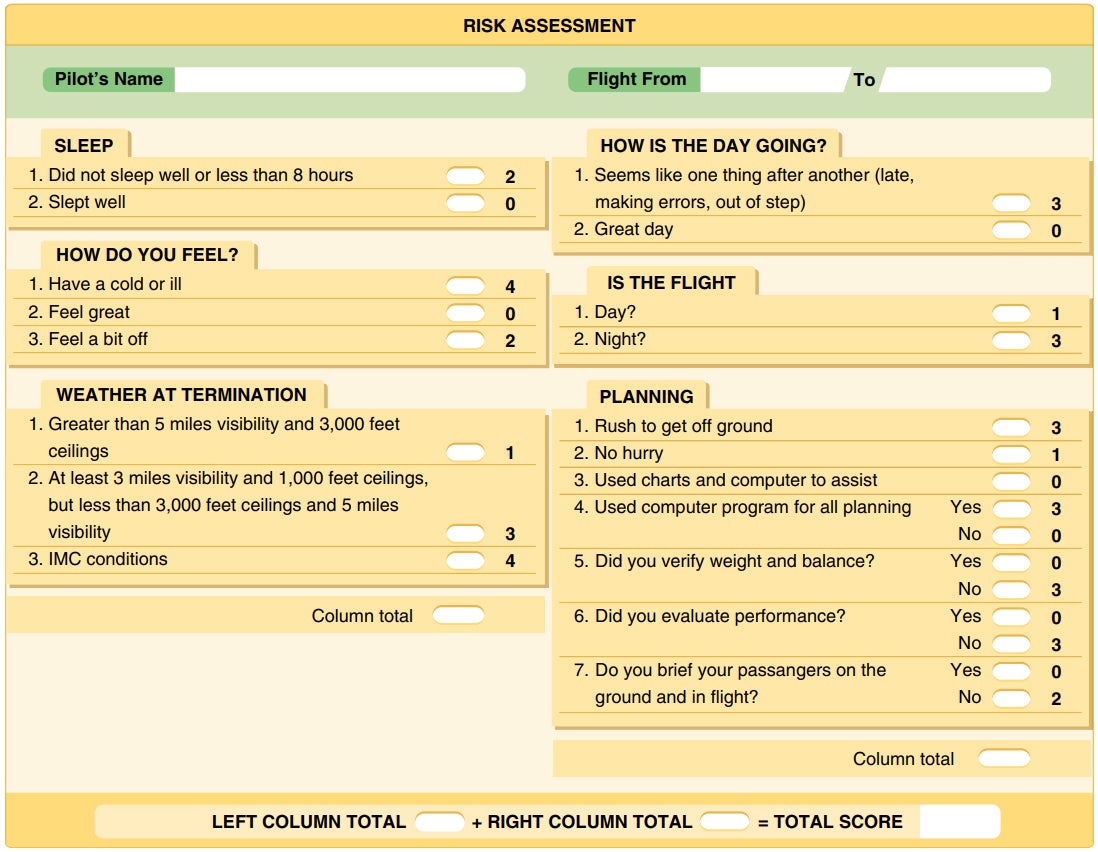

Much of the FAA’s approach to teaching risk management concepts assigns values—even color coding—to various aspects of planning and executing a flight. The basic idea seems to be that a decision reached empirically is more effective than one reached through some other means. The implication is that pilots can’t properly weigh likelihoods and outcome severities without math. There’s some logic to that.

It flows from the finite, measured nature of aviation. We often think in terms of time, distance, altitude, weight and a host of other factors often best described using numbers. We don’t, for example, label a crosswind component as mild, stiff or really stiff; instead, we assign it a value, measured in knots and angle to the runway. Given that numbers often are what we use to gauge aircraft performance, it’s natural for us to want to apply the same basic math to risk management and aeronautical decision-making. The problem is that many of the factors we must consider to minimize risk don’t easily lend themselves to numerical expression.
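For readers who like to see the arithmetic, here is a minimal Python sketch of how a crosswind value can be derived from wind speed and the angle between the wind and the runway. The function name and the sample numbers are illustrative assumptions, and the sketch assumes a steady wind with directions given in the same reference as the runway heading.

```python
import math

def wind_components(wind_speed_kt, wind_dir_deg, runway_heading_deg):
    """Return (headwind, crosswind) in knots for a steady wind.

    Positive headwind means wind on the nose; crosswind is returned
    as a magnitude, regardless of which side it comes from.
    """
    # Angle between the wind direction and the runway heading, in radians.
    angle = math.radians(wind_dir_deg - runway_heading_deg)
    headwind = wind_speed_kt * math.cos(angle)
    crosswind = abs(wind_speed_kt * math.sin(angle))
    return headwind, crosswind

# Hypothetical example: landing runway 36 with the wind from 030 at 20 knots.
headwind, crosswind = wind_components(20, 30, 360)
print(f"headwind {headwind:.1f} kt, crosswind {crosswind:.1f} kt")
```

This is the easy kind of number: the inputs are measured and the result is repeatable. The factors discussed next are not so cooperative.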

Take pilot fatigue. We know how much sleep we got last night, and at least have an estimate for when we’ll arrive at our destination, but it’s impossible to assign a value to the extent, if any, to which we won’t be performing at 100 percent of our capabilities. Will we be at 90 percent? Fifty? How much is enough to see us through the approach to FAA minimums at the end of the trip? How much is too little?

The problem with much of the FAA/industry’s approach to risk management is that it requires quantifying that which often doesn’t lend itself to quantification. Still, the associated guidance does an admirable job of trying to get to that conclusion. It’s just that the act of assigning a value to something that has no objective number associated with it isn’t an exact science. And, as discussed, aviation is an endeavor frequently demanding precision.

As an aside, let me add that if this kind of calculation works for you, by all means go ahead and use it. I don’t advocate that pilots ignore risk management in their flying; far from it. I and others I know well use these concepts on every flight; we just don’t try to apply numbers to them. The human tendency to minimize a risk’s impact means the value assigned can and will be adjusted so that the sum of the various factors conveniently falls below the threshold at which mitigations should be implemented.
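To illustrate how easily a summed score can be nudged under a threshold, here is a small Python sketch. Everything in it is hypothetical: the factor names, the scores and the threshold of 10 are made up for illustration and are not taken from any FAA or industry worksheet.

```python
# Hypothetical threshold at which mitigations should kick in.
MITIGATION_THRESHOLD = 10

def total_risk(scores):
    """Sum the per-factor risk scores (higher means riskier)."""
    return sum(scores.values())

def needs_mitigation(scores, threshold=MITIGATION_THRESHOLD):
    """Return True when the summed score reaches the threshold."""
    return total_risk(scores) >= threshold

# An honest first pass at a made-up flight.
honest = {"fatigue": 4, "weather": 3, "recent_experience": 2, "night": 2}

# The same flight after the pilot decides he isn't really that tired.
shaded = dict(honest, fatigue=2)

print(total_risk(honest), needs_mitigation(honest))
print(total_risk(shaded), needs_mitigation(shaded))
```

Nothing about the flight changed between the two tallies; only the subjective fatigue score did, and that was enough to move the total from above the threshold to below it.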

The Value Of Scenarios

I think recognition of the difficulty in assigning objective, numeric values to subjective factors is one reason scenario-based risk management training was developed. As contributor Robert Wright has written many times in these pages, the FAA’s now-implemented Airman Certification Standards (ACS) are designed, in large part, to integrate risk management concepts into the practical tests for pilot certificates. Although the phrase “personal minimums” does appear in the Private Pilot ACS, there aren’t any suggested standards for what they should be.

Instead, the Private ACS has this to say: “…the evaluator will assess the applicant’s understanding by providing a scenario that requires the applicant to appropriately apply and/or correlate knowledge, experience, and information to the circumstances of the given scenario. The flight portion of the practical test requires the applicant to demonstrate knowledge, risk management, flight proficiency, and operational skill in accordance with the ACS.” Risk management is an element of each task in the Private ACS, at least as far as we can tell, but nowhere can we find where it includes specific recommendations to increase ILS minimums, for example, by a certain value to minimize risk.

Teaching risk-management concepts through scenario-based training seems to me to be far superior to creating personal minimums. Although scenarios can be too outlandish (instructors and evaluators need to keep it real), their value lies less in assessing the risk of one extreme condition than in combining several abnormal conditions into a single set of circumstances. The goal is to get the pilot-in-training to consider a set of less-than-optimal circumstances and apply critical thinking to the problem. To me, this is far superior to adding up a list of factors assigned often-arbitrary values and then, when the sum exceeds a certain threshold, canceling the flight outright. The basic necessity of assigning values is the problem.

Critical Thinking

To me, the value of scenario-based training is that it stimulates the pilot to think critically about the combination of factors (there’s never just one, by itself) he or she must confront on the planned flight. Rather than assigning a set of values to those factors and rethinking things when the total number rings a bell, we need to emphasize there is a series of choices and decisions we must make before we take off and throughout the flight.

For example, making a lengthy diversion around convective weather will impact the fuel available at our planned destination. Since the chance of missing the approach and needing to go to the alternate may be very real, and the fuel we used for the diversion might come in handy, the concept of stopping en route to top off should arise, not necessarily the idea that the weather is too bad to continue to the planned destination (although it may be, given the available fuel). Same thing for a stronger-than-forecast headwind or a fuel transfer pump suddenly announcing it’s past its sell-by date.

Importantly, diverting around the convective weather and then stopping for fuel isn’t the only “correct” response in this scenario. We also can pull up just short of the weather, secure the airplane and wait out the storm, and then launch for the destination with sufficient fuel, as planned. For that matter, there is no correct response to such a scenario, only success and failure.

Conventional application of personal minimums—I need to have an hour of fuel aboard at the original destination’s final approach fix, for example—addresses this need but can omit the option of stopping for more gas. If the option of landing and topping off the tanks is a reflex, it’s not likely to be omitted in this scenario.

Some might call this “thinking outside the box,” but it should be reflexive.

Options Are Good

“Hope is not a plan.” “No plan survives first contact with the enemy.” You can probably think of other phrases expressing the concept that no matter how much we want our present task to succeed, and no matter how good our original plan may be, the real world often has other ideas and can transform all our planning into a quivering heap on the cockpit floor. How we respond to that challenge is the ultimate determination of how risky a proposed operation is. Should you plan for Plan A to fail miserably and add Plans B and C to your quiver of arrows? Absolutely. But each scenario is different, and coming up with rules that work in every situation is the hard part.

The bottom line in all this, at least to me, is having options. For you coders, it’s a classic if/then statement. Personal minimums are about defining the statement’s first clause, the “if,” while what follows often hasn’t been defined. For example, if a parameter drops below your personal minimum, how will you respond? If your calculations show you’ll have five minutes less fuel than your minimum at your destination, is that grounds for stopping en route to top the tanks? Maybe, if the destination is IMC and there’s a chance you’ll miss. Probably not if it’s good VFR all the way.
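For the coders, the fuel example might be sketched in Python as follows. This is only an illustration: the function name, its parameters and the suggested actions are assumptions of the sketch, and it turns the “maybe” and “probably not” above into firm branches that a real pilot would weigh against everything else going on.

```python
def fuel_decision(est_reserve_min, personal_min_min, destination_imc):
    """Suggest an action by comparing fuel reserve to a personal minimum.

    est_reserve_min: estimated fuel remaining at the destination, in minutes
    personal_min_min: the pilot's personal minimum reserve, in minutes
    destination_imc: True if the destination is reporting IMC
    """
    # The "if": the personal minimum itself.
    if est_reserve_min >= personal_min_min:
        return "continue as planned"
    # The "then": what to do once the minimum is busted.
    if destination_imc:
        return "stop en route and top off"
    return "continue, and keep watching fuel and weather"

# Five minutes short of a hypothetical one-hour personal minimum.
print(fuel_decision(55, 60, destination_imc=True))
print(fuel_decision(55, 60, destination_imc=False))
```

The point of writing out the second and third branches is that they exist at all. A personal minimum that stops at the first comparison tells you a line was crossed but not what to do about it.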

Maybe you’ve got a 1000-and-three personal minimum for a non-precision approach with a 450-foot MDA. The AWOS is advertising 900 broken with 10 miles at the surface and benign winds. Will that missing 100 feet force you to divert? Or you’re trying to land on home plate’s 5000-foot runway as the winds are giving you a direct, steady crosswind at 15 knots while you’ve sworn you’ll never fly your Skyhawk in more than 12. Will that extra three knots force you to go somewhere else?

A well-trained and experienced pilot wouldn’t think too much about these scenarios. The missing 100 feet on the approach takes you slightly lower than pattern altitude but still above MDA; proper procedure is what really determines if an instrument approach is successful, anyway. The greater visibility underneath should make the approach simple, especially if you can get down early. The extra three knots on the crosswind landing, meanwhile, is a learning opportunity, not an emergency.

My bottom line? Personal minimums are a personal choice. The trick is what happens when the weather turns out a bit lower than you like, or the wind picks up a knot or three and you can’t get home without busting your own minimums. What will you do? Fly the airplane, then revise your personal minimums on the ground.

When To Be Precise

There obviously are times when numbers inform us of an unsafe condition. If an airplane needs 2000 feet of runway to get down and stopped, planning to use one offering only 1500 feet isn’t a matter of risk management; it’s foolhardy. Planning a four-hour flight with only three hours of fuel aboard is just as hopeless.

Somewhere between the extremes of flying to fuel exhaustion or rolling off the end of the runway, and stopping for more gas or choosing a longer runway, is the empty void risk management and aeronautical decision-making were created to fill. One question is how to assess that void, and assigning numerical values to various factors and outcomes is the best the industry has been able to come up with.

If You Must…A Template

If you’re contemplating a set of personal minimums anyway, or are looking to update what you already have, you may want to ensure it at least covers the following basic elements.

Recent Experience

It’s perfectly reasonable to be more cautious when you’ve not been in the left seat for some time. Get out and fly, and consider yourself a student once again until you’re comfortable.

Fuel

The FAA’s minimum fuel requirements for VFR are…minimal. Even seasoned pilots typically increase them to an hour, and pad the IFR fuel requirements, too. We want an hour in the tanks when parked at the destination.

Low Weather

VFR-only pilots will treat this differently from the instrument-rated. But in both cases tackling low weather is best done with some experience. Find an instructor willing to teach it.

Wind

Get some practice with a good instructor on crosswinds and gusts. Keep in mind that more runway makes dynamic winds easier to handle. Don’t forget that you can usually go to a nearby airport with different runway alignments, both to practice and to get down in a pinch.

Jeb Burnside is the editor-in-chief of Aviation Safety magazine. He’s an airline transport pilot who owns a Beechcraft Debonair, plus the expensive half of an Aeronca 7CCM Champ.

This article originally appeared in the July 2021 issue of Aviation Safety magazine.


My considerations weigh compounding factors and data quality…is the ceiling cleanly defined with unlimited visibility below or is there a “few or scattered” layer of fuzzballs below, or scuz hanging out the bottom or visibility impeded by fog/rain/mist? Do I have one divert in range in a sea of red on the chart or are there plenty of easy VMC diverts along the route? Dewpoint spread/trend/daylight waxing/waning? Number of weather observations along the route? Is that water I’m flying over bathwater or icewater? How dark a night? How bumpy, gusty, shearing? Need those readers to see the plate? Sitting next to a CFII or empty/unqualified seat? Known good airplane or not recently flown or rental hack? Fuzzy edges to the metrics make a difference too.

The PP ACS actually does explicitly mention personal minimums (task I H, skill 2): “Perform self-assessment, including fitness for flight and personal minimums, for actual flight or a scenario given by the evaluator”. I provide the applicant an overall scenario, add details such as “On the day of the flight, you forget to ________; how does that affect your go/no-go decision?” and that way see whether the prospective pilot applies the risk mitigation element that I’ve selected for that task (risk 2, hazardous attitudes, for example). Another example is “You are approaching destination and ATIS reports windshear; is that a problem? What are your options?”

The point is that by adhering to the ACS, examiners are tasked with continuously probing the applicant for chinks in their stated personal minimums armor, whether they’re just numbers or something more subtle. This means, therefore, that CFIs should do the same in order to properly prepare their students for a test.

Big-iron operations follow rules on reserve fuel, which provides tolerance both for the unexpected en route and for moving on to an alternate airport if unable to land after one or two approaches.

Qantas illustrated rigor one day approaching London, England.

Unexpected ATC delays motivated crew to ask ATC for expedited handling to avoid burning into fuel reserves.

ATC asked if they were declaring a fuel emergency, crew responded ‘Qantas 123 is declaring a fuel emergency.’

Crew/Qantas policy was to not burn into reserves.

Get on the ground rather than waste time debating and taking risk of further fiddling around by ATC.

I’m a couple of weeks away from my practical exam so forgive me if I’m missing something…

From your article: “My bottom line? Personal minimums are a personal choice. The trick is what happens when the weather turns out a bit lower than you like, or the wind picks up a knot or three and you can’t get home without busting your own minimums. What will you do? Fly the airplane, then revise your personal minimums on the ground.”

Are you really saying that if you can’t meet your minimums, you should ignore them and then revise them later to match what you did (assuming you survive)?

I understand that personal minimums ignore the bigger picture. However, I do see value in trying to keep track of where the line is between a situation you know you can handle and one that you’ve never faced before.

Perhaps I will decide that it’s ok to go ahead with a particular flight below minimums. Perhaps I will then modify my minimums based on that flight experience. At least my minimums served as a flag for me to examine my plans carefully.

I think scenario-based training is great for all the reasons you listed, but I don’t see that as being in conflict with personal minimums.